There are two main ways to implement an A/B test: server-side and client-side. Most engineering teams opt to implement experiments server-side because it:

- eliminates content flashes ("FOUC") and delays in displaying content, both of which hurt page speed metrics such as First Contentful Paint

- doesn't require any client-side JavaScript to run the experiment

The main advantage of client-side experiments is that they can be implemented without code (especially via PostHog's new no-code web experiments, which are still in beta), meaning marketing teams can bypass the engineering team and execute experiments themselves.

Server-side experiments should have custom exposure criteria

While Atonomo generally recommends server-side experiments where possible (Atonomo delivers A/B tests as server-side experiments in pull requests), they usually require you to set the exposure criteria manually to avoid including bots in your experiment.

This is especially true if your primary metric is measured on the client side with the JS SDK. When you count every server-side exposure, you're including crawlers and bots in your experiment. This dilutes the effect size because crawlers and bots are far less likely to "convert", especially if the conversion event is measured on the client side.

What this looks like in a real-world A/B test

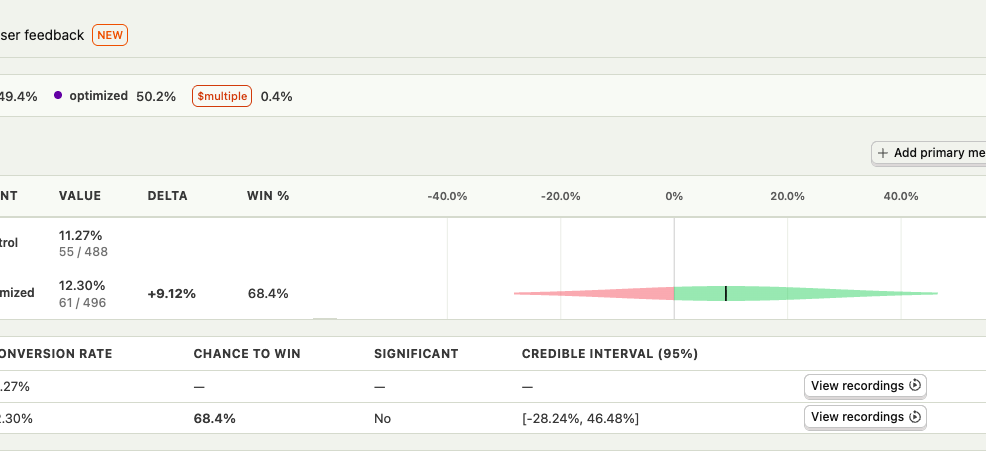

This recently affected one of the experiments we were running for a customer. Because we hadn't customized the exposure settings, 80% of the recorded exposures were crawlers and bots, and only 20% were real traffic.

This inflated the time to statistical significance and reduced the actual win probability. Once we amended the exposure criteria, a 23% uplift was revealed in a core part of their funnel.

Including bots dilutes the measured effect by 4-5x: it pulls the conversion rates of all of the arms closer together (and closer to 0%), which throws off the statistical significance calculation. To reach statistical significance faster, you want the measured difference between the arms to be as large as possible.
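To make the dilution concrete, here is a minimal arithmetic sketch. All numbers are hypothetical (2,000 real users per arm, a 10% control conversion rate and a 23% relative uplift), and it assumes bots never convert, which is a simplification of "far less likely to convert".

```python
def observed_rate(conversions: int, real_users: int, bot_share: float) -> float:
    """Conversion rate the experiment sees when bots make up `bot_share`
    of all exposures and never convert (a simplifying assumption)."""
    total_exposures = real_users / (1.0 - bot_share)
    return conversions / total_exposures


# Hypothetical numbers, not taken from the experiment described above.
REAL_USERS = 2000
CONTROL_CONVERSIONS = 200  # 10.0% of real users
VARIANT_CONVERSIONS = 246  # 12.3% of real users, a 23% relative uplift

for bot_share in (0.0, 0.8):
    control = observed_rate(CONTROL_CONVERSIONS, REAL_USERS, bot_share)
    variant = observed_rate(VARIANT_CONVERSIONS, REAL_USERS, bot_share)
    print(
        f"bots={bot_share:.0%} control={control:.2%} variant={variant:.2%} "
        f"absolute gap={variant - control:.2%}"
    )
```

With 80% bots, both arms drop to roughly a fifth of their real conversion rates, so the absolute gap between them shrinks by the same factor even though the relative uplift among real users has not changed.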

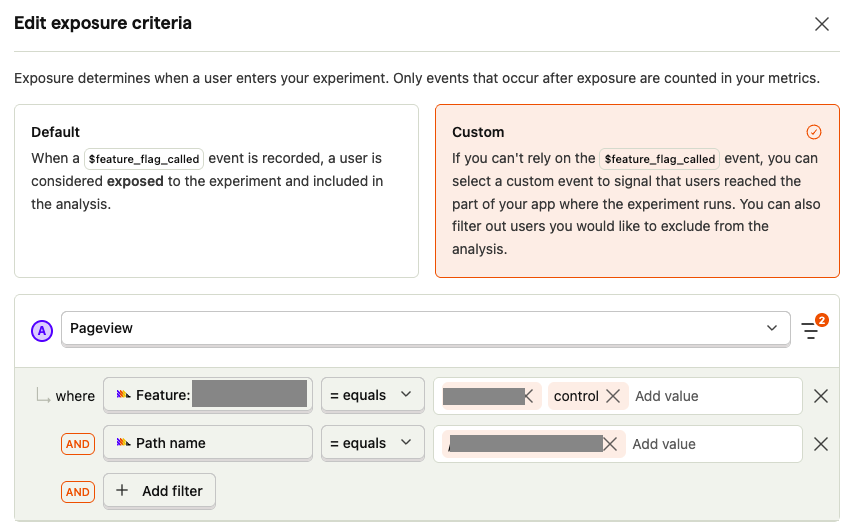

We ended up using the $pageview event as the exposure, conditioned on the path name and on the feature flag property being assigned to either control or variant.
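In PostHog this filter is configured in the experiment's exposure settings rather than in code, but the logic can be sketched as a predicate over raw events. The flag key and path below are hypothetical, and the property names `$pathname` and `$feature/<flag key>` are assumptions about how the event properties are named in your project, so check them against your own event data.

```python
from typing import Any, Mapping

FLAG_KEY = "pricing-page-test"  # hypothetical feature flag key
TARGET_PATH = "/pricing"        # hypothetical path under test
ARMS = {"control", "variant"}   # arm names from the text above


def is_exposure(event: Mapping[str, Any]) -> bool:
    """Return True if an event counts as an exposure under the custom criteria:
    a $pageview on the target path with the flag set to one of the arms."""
    if event.get("event") != "$pageview":
        return False
    props = event.get("properties") or {}
    return (
        props.get("$pathname") == TARGET_PATH
        and props.get(f"$feature/{FLAG_KEY}") in ARMS
    )


# A client-side pageview carrying the flag value counts as an exposure.
print(is_exposure({
    "event": "$pageview",
    "properties": {"$pathname": "/pricing", "$feature/pricing-page-test": "variant"},
}))
```

Because the $pageview event is sent by the JS SDK, requests from clients that never run the SDK produce no matching event and drop out of the experiment.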

Downside of client-side exposure criteria

The main downside of using the PostHog JS SDK to set exposure is that it doesn't account for fast bounces.

If your server-side experiment arms can impact the bounce rate before the JS SDK fully loads and sends the first $pageview event, you're losing that information.

As an engineer implementing A/B tests, you need to weigh this trade-off before deciding whether the exposure criteria should use server-side or client-side exposure events.

A good example of how delicate this is comes from Alexey Komissarouk's article about an Opendoor A/B test: the team improved load performance, yet their A/B test metrics indicated that performance was worse! This was simply because the frontend SDK loaded faster, so they stopped underreporting the bounce rate:

When the homepage loads, the front-end tracking code records a 'page view' event. If the 'page view' event was recorded, but then nothing else happens, analytics will consider that user to have "bounced".

It turned out that the old site was so slow that many folks left before their 'page view' ever got recorded. In other words, the old site was dramatically under-reporting bounces.
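The under-reporting effect described in the quote can be illustrated with a small arithmetic sketch. The visitor counts are invented for illustration and are not taken from the Opendoor test; the only assumption is that a visitor who leaves before the 'page view' event fires is invisible to analytics.

```python
def bounce_rates(visitors: int, left_before_pageview: int, bounced_after_pageview: int):
    """Return (measured, true) bounce rates.

    Visitors who leave before the 'page view' event fires are never recorded,
    so they are missing from both the numerator and the denominator of the
    measured rate.
    """
    recorded = visitors - left_before_pageview
    measured = bounced_after_pageview / recorded
    true = (left_before_pageview + bounced_after_pageview) / visitors
    return measured, true


# Hypothetical numbers: the slow site loses many visitors before tracking loads.
slow_measured, slow_true = bounce_rates(1000, left_before_pageview=300, bounced_after_pageview=200)
fast_measured, fast_true = bounce_rates(1000, left_before_pageview=50, bounced_after_pageview=400)

print(f"slow site: measured={slow_measured:.1%} true={slow_true:.1%}")
print(f"fast site: measured={fast_measured:.1%} true={fast_true:.1%}")
```

In this made-up scenario the faster site has the lower true bounce rate but the higher measured one, which is the same kind of reversal the article describes.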